Audio Analysis

Spotify analyzes the raw audio of each song to derive characteristics such as key, tempo, mood, and time signature.

This analysis is done with the help of convolutional neural networks (CNNs).

Below is an excerpt from "How Does Spotify Know You So Well?" explaining how Spotify uses CNNs:

Convolutional neural networks are the same technology used in facial recognition software. In Spotify’s case, they’ve been modified for use on audio data instead of pixels. Here’s an example of a neural network architecture:

This particular neural network has four convolutional layers, seen as the thick bars on the left, and three dense layers, seen as the more narrow bars on the right. The inputs are time-frequency representations of audio frames, which are then concatenated, or linked together, to form the spectrogram.
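To make the input concrete, here is a minimal sketch of how a time-frequency representation might be built: the signal is cut into overlapping frames, each frame is turned into a magnitude spectrum, and the spectra are stacked along time to form a spectrogram. The frame size, hop, Hann window, naive DFT, and the 1 kHz test tone are all assumptions chosen for illustration; the excerpt does not say how Spotify computes its spectrograms.

```python
import cmath
import math


def frame_signal(samples, frame_size, hop):
    """Split a 1-D signal into overlapping frames of frame_size samples."""
    return [samples[i:i + frame_size]
            for i in range(0, len(samples) - frame_size + 1, hop)]


def magnitude_spectrum(frame):
    """Magnitude of a naive DFT over one Hann-windowed frame (bins 0..N/2)."""
    n = len(frame)
    windowed = [x * 0.5 * (1 - math.cos(2 * math.pi * i / (n - 1)))
                for i, x in enumerate(frame)]
    return [abs(sum(x * cmath.exp(-2j * math.pi * k * i / n)
                    for i, x in enumerate(windowed)))
            for k in range(n // 2 + 1)]


def spectrogram(samples, frame_size=64, hop=32):
    """Stack per-frame spectra along time: result[t][k] is time t, bin k."""
    return [magnitude_spectrum(f)
            for f in frame_signal(samples, frame_size, hop)]


# Hypothetical test signal: a 1 kHz sine sampled at 8 kHz.
sample_rate = 8000
tone = [math.sin(2 * math.pi * 1000 * t / sample_rate) for t in range(512)]
spec = spectrogram(tone)

# Each bin spans sample_rate / frame_size = 125 Hz, so the tone should peak
# in the bin nearest 1000 / 125.
peak_bin = max(range(len(spec[0])), key=lambda k: spec[0][k])
```

In practice a library FFT and a mel-scaled frequency axis would be more typical; the naive DFT here only keeps the example dependency-free.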

The audio frames go through these convolutional layers, and after passing through the last one, you can see a “global temporal pooling” layer, which pools across the entire time axis, effectively computing statistics of the learned features across the time of the song.

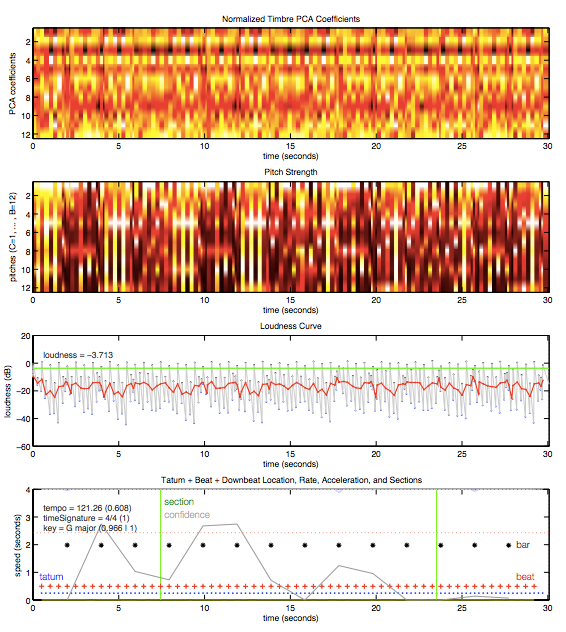
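The global temporal pooling step can be sketched in a few lines. The example below assumes the last convolutional layer outputs a feature map indexed as `[time][channel]`, and it uses mean and max as the pooled statistics; both the layout and the choice of statistics are assumptions for illustration, not details confirmed by the excerpt. The point is that clips of any length collapse to a vector of the same size, which is what lets fixed-size dense layers follow.

```python
def global_temporal_pooling(feature_map):
    """Pool a [time][channel] feature map across the whole time axis.

    Returns the per-channel means followed by the per-channel maxima,
    so the output length depends only on the number of channels.
    """
    if not feature_map:
        raise ValueError("feature_map must contain at least one time step")
    channels = list(zip(*feature_map))  # transpose to [channel][time]
    means = [sum(c) / len(c) for c in channels]
    maxes = [max(c) for c in channels]
    return means + maxes


# Hypothetical feature maps with 2 channels and different durations.
short_clip = [[0.0, 2.0], [1.0, 4.0]]
long_clip = [[0.0, 2.0], [1.0, 4.0], [2.0, 0.0], [1.0, 2.0]]

pooled_short = global_temporal_pooling(short_clip)  # [0.5, 3.0, 1.0, 4.0]
pooled_long = global_temporal_pooling(long_clip)    # [1.0, 2.0, 2.0, 4.0]
```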

After processing, the neural network spits out an understanding of the song, including characteristics like estimated time signature, key, mode, tempo, and loudness. Below is a plot of data for a 30-second snippet of “Around the World” by Daft Punk.

Ultimately, this reading of the song’s key characteristics allows Spotify to understand fundamental similarities between songs and therefore which users might enjoy them, based on their own listening history.
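One common way to compare songs once each has a numeric feature vector is cosine similarity. The sketch below is only an illustration of that idea: the excerpt does not say which similarity measure Spotify uses, and the track names and feature values are made up.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length feature vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # treat an all-zero vector as similar to nothing
    return dot / (norm_a * norm_b)


def most_similar(query, catalog):
    """Return the name of the catalog entry closest to the query vector."""
    return max(catalog, key=lambda name: cosine_similarity(query, catalog[name]))


# Hypothetical audio feature vectors (values are invented for illustration).
catalog = {
    "track_a": [1.0, 0.0, 0.5],
    "track_b": [0.0, 1.0, 0.0],
}
listened_to = [0.9, 0.1, 0.4]

best_match = most_similar(listened_to, catalog)
```

Here `best_match` is `"track_a"`, because its vector points in nearly the same direction as the listener's track.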
